Turn Your Datacenter into an AI Factory

Production LLM inference on your infrastructure — OpenAI-compatible APIs, optimized hardware, full data sovereignty.

The Challenge

From GPU Capacity to AI Revenue

Many datacenters have GPU capacity and growing demand. What's missing is a reliable way to turn that capacity into a sellable service with predictable performance and economics.

Revenue Gap

GPU capacity alone does not translate into revenue. Without the right serving stack and pricing model, utilization stays uneven and margins remain unclear.

Operational Complexity

Production workloads require low latency, high concurrency, and predictable behavior. Ad-hoc deployments and generic stacks struggle under real production traffic.

Loss of Control

Many AI providers sell access only to their own infrastructure. Datacenters that resell through them lose control over pricing, branding, and customer relationships.

CloudRift helps datacenter operators close this gap

We do this by combining production software, benchmark-driven hardware guidance, and operational experience from running real datacenters at scale.

The Platform

Run Enterprise LLMs on Your Own GPUs

CloudRift enables organizations to offer production-grade LLM-as-a-service, running on their own hardware and delivered through enterprise-ready APIs.

Production LLM-as-a-Service

OpenAI-compatible APIs, streaming support, and usage-based billing — designed for real production workloads.

```python
import openai

client = openai.OpenAI(
    api_key="YOUR_RIFT_API_KEY",
    base_url="https://inference.cloudrift.ai/v1"
)

completion = client.chat.completions.create(
    model="qwen/qwen3.5-35b-a3b",
    messages=[
        {"role": "user", "content": "Hello"}
    ],
    stream=True
)

for chunk in completion:
    print(chunk.choices[0].delta.content or "", end="")
```
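For usage-based billing, one common approach is to meter the token counts that OpenAI-compatible responses report in their `usage` object (`prompt_tokens` and `completion_tokens`). The following is a minimal sketch under that assumption. The `Rate` class, the `invoice_total` helper, and the prices are hypothetical and not part of CloudRift's API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rate:
    """Price per one million tokens (hypothetical values set by the operator)."""
    input_per_million: float
    output_per_million: float


def invoice_total(usage_records: list[dict], rate: Rate) -> float:
    """Sum charges over records shaped like a chat completion `usage` object."""
    prompt = sum(r["prompt_tokens"] for r in usage_records)
    completion = sum(r["completion_tokens"] for r in usage_records)
    return (prompt * rate.input_per_million
            + completion * rate.output_per_million) / 1_000_000


# Two metered requests, billed at made-up rates.
records = [
    {"prompt_tokens": 1000, "completion_tokens": 500},
    {"prompt_tokens": 3000, "completion_tokens": 1500},
]
total = invoice_total(records, Rate(input_per_million=0.5, output_per_million=2.0))
```

Pricing input and output tokens separately is a design choice, not a requirement. A flat per-token rate works with the same records.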

Data-Driven Hardware Decisions

Real-world benchmarks and performance data guide GPU selection, configuration, and deployment strategy for your workloads.

Built by Experts

Designed and operated by teams with deep experience in model optimization, datacenter operations, and production inference at scale.

Process

How It Works

Step 1

Benchmark & Plan

Benchmark workloads across GPUs and configurations to define performance targets and hardware requirements.

Step 2

Deploy & Configure

Deploy a production stack optimized for throughput, latency, and concurrency with pre-built templates.

Step 3

Monitor & Optimize

Track performance metrics and tune your infrastructure in real time.

Step 4

Scale on Demand

Deliver via OpenAI-compatible APIs with streaming and usage-based billing that scales with your workload.

Step 5

Collaborate & Share

Connect with trusted build partners for hardware procurement, financing, and benchmark-driven specifications.

Step 6

Iterate & Improve

Monitor performance, adjust configurations, and optimize cost and utilization over time.

Data-Driven

Benchmark-Driven Hardware Guidance

Inference performance depends heavily on hardware choice and configuration. CloudRift runs regular benchmarks across GPUs, models, and serving setups to understand real-world tradeoffs.

Why Our Benchmarks Matter

Spec-sheet numbers and synthetic tests do not reflect real production behavior. We measure complete serving paths under realistic load.

What We Benchmark

Models, GPU generations, throughput, latency, concurrency, cost efficiency, context lengths, parallelization parameters, and inference frameworks.
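As a rough illustration of what a load test on a serving path can report, the sketch below reduces a set of per-request records to throughput and latency percentiles. The `RequestSample` fields and the `summarize` helper are hypothetical names chosen for this example, not CloudRift tooling.

```python
from dataclasses import dataclass
from statistics import quantiles


@dataclass
class RequestSample:
    """One completed request from a load test (hypothetical record shape)."""
    start_s: float      # time the request was sent, in seconds
    end_s: float        # time the last token arrived, in seconds
    output_tokens: int  # tokens generated for this request


def summarize(samples: list[RequestSample]) -> dict[str, float]:
    """Aggregate throughput and latency percentiles over the test window."""
    latencies = sorted(s.end_s - s.start_s for s in samples)
    window_s = max(s.end_s for s in samples) - min(s.start_s for s in samples)
    total_tokens = sum(s.output_tokens for s in samples)
    # quantiles(n=100) returns 99 cut points: index 49 is p50, index 94 is p95.
    cuts = quantiles(latencies, n=100, method="inclusive")
    return {
        "tokens_per_second": total_tokens / window_s,
        "p50_latency_s": cuts[49],
        "p95_latency_s": cuts[94],
    }
```

Running the same summary across GPU types, context lengths, and concurrency levels gives comparable numbers for each configuration.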

How Operators Use This Data

Operators use this data to choose hardware, tune deployments, and define pricing models for their inference services.
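One way benchmark numbers can feed a pricing model is a simple cost-per-token calculation. The sketch below assumes a known hourly cost for a GPU node and a measured sustained throughput. The function name and all figures are illustrative assumptions, not CloudRift data.

```python
def cost_per_million_tokens(node_cost_per_hour: float,
                            tokens_per_second: float,
                            utilization: float = 1.0) -> float:
    """Serving cost per 1M output tokens, in the currency of node_cost_per_hour.

    utilization is the fraction of each hour the node spends serving traffic.
    """
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in (0, 1]")
    tokens_per_hour = tokens_per_second * 3600 * utilization
    return node_cost_per_hour / tokens_per_hour * 1_000_000


# Made-up inputs: a node costing 20 per hour, 2500 tokens/s, busy half the time.
cost = cost_per_million_tokens(20.0, 2500.0, utilization=0.5)
print(f"{cost:.2f}")  # 4.44
```

Any price set below this figure loses money at that utilization, which is why uneven utilization makes margins hard to predict.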

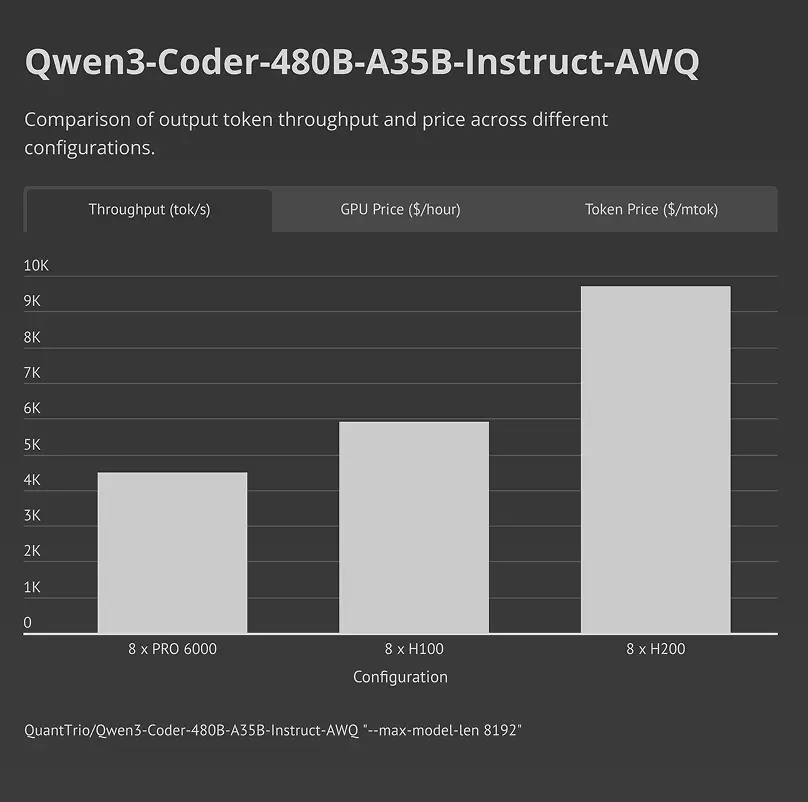

Real-world benchmark: Qwen3-Coder-480B-A35B-Instruct-AWQ tested on 8x RTX Pro 6000, 8x H100, and 8x H200 GPU configurations

Hardware

Inference Accelerators and Hardware Optimization

Some workloads require more than standard GPU configurations. CloudRift supports deeper hardware and system-level optimizations for demanding use cases.

Accelerator Options

For select deployments, we support specialized accelerators designed to improve throughput and reduce latency by optimizing memory access and model serving paths.

- Faster KV cache access

- Reduced memory bottlenecks

- Better performance under high concurrency
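To see why KV cache access becomes a bottleneck under high concurrency, it helps to estimate how much memory the cache needs. The sketch below uses the standard sizing rule for a transformer decoder: one key and one value vector per token, per layer, per KV head. The model shape is a hypothetical example, not a specific supported model.

```python
def kv_cache_bytes(num_layers: int, num_kv_heads: int, head_dim: int,
                   context_tokens: int, concurrent_sequences: int,
                   bytes_per_value: int = 2) -> int:
    """Estimate KV cache size for a standard transformer decoder.

    Each token stores one key and one value vector per layer per KV head,
    hence the leading factor of 2.
    """
    per_token = 2 * num_layers * num_kv_heads * head_dim * bytes_per_value
    return per_token * context_tokens * concurrent_sequences


# Hypothetical shape: 32 layers, 8 KV heads of dimension 128, 16-bit values.
total = kv_cache_bytes(32, 8, 128, context_tokens=32_768, concurrent_sequences=16)
print(f"{total / 2**30:.0f} GiB")  # 64 GiB
```

The estimate grows linearly with both context length and the number of concurrent sequences, so memory capacity and bandwidth often limit concurrency before compute does.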

System and Rack-Level Optimization

Beyond individual components, CloudRift helps operators optimize their systems, including GPU density, storage layout, and network configuration.

- Hardware-aware serving configurations

- Balanced GPU, memory, and I/O

- Designs aligned with benchmark data

Use Cases

Enterprise Use Cases

CloudRift supports workloads with strict requirements around latency, throughput, and reliability.

Telecom and Network Services

Inference workloads supporting customer interaction, network optimization, and real-time decision systems. These use cases prioritize predictable latency and high concurrency across distributed environments.

Large-Scale AI Applications

Customer-facing AI systems such as chat interfaces, content generation, or search augmentation. These workloads demand throughput, reliability, and efficient scaling under peak traffic.

Banks and Financial Services

Workloads for fraud detection, customer service automation, document processing, and risk analysis. These use cases demand high reliability, data security, and regulatory compliance with predictable performance.

Internal Enterprise AI Systems

AI models used internally for automation, analytics, or support tooling, where reliability and data locality matter as much as performance.

Why CloudRift

Why Datacenter Operators Choose CloudRift

Infrastructure Ownership

Everything runs on your hardware, in your datacenters. You control capacity, deployment models, and physical constraints.

Customer & Pricing Control

You set pricing, billing models, and customer relationships. CloudRift does not sit between you and your customers.

Commercial Model

Designed for operators who want to offer AI as a service, not enterprises looking to consume someone else's cloud.

Operational Depth

CloudRift is built by teams who manage datacenters at scale. We design inference systems with operational realities in mind, not just API performance.

Hardware Flexibility

We support multiple GPU generations and configurations and help select hardware based on real benchmarks, not fixed product tiers.

Our Team

Years of Experience in Model Deployment & Optimization

Our team has deep expertise in production inference workloads, from deployment to optimization at scale.

Animoji

Real-time facial tracking

ARKit

Spatial computing

Hand Tracking

Vision Pro gestures

Portrait Mode

Depth sensing & bokeh

Roblox Face Tracking

Live avatar animation


Room Plan

3D space scanning

AI Texture Generation

Procedural asset creation

Hand Tracking

Gesture recognition

Ready to turn your datacenter into an AI factory?

Talk to our team about deploying production inference on your infrastructure.

Contact Us

Let us know if you're looking to:

- Find an affordable GPU provider

- Sell your compute online

- Manage on-prem infrastructure

- Build a hybrid cloud solution

- Optimize your AI deployment

PO Box 1224, Santa Clara, CA 95052, USA

+1 (831) 534-3437
